How to Connect an LP-Research IMU to ROS (Update)

Introduction

This article describes how to connect an LP-RESEARCH inertial measurement unit (IMU) using a Robot Operating System (ROS) node. We are happy to announce that our IMU ROS sensor driver has been accepted into the official ROS package repository. The Robot Operating System, or ROS in short, is an open-source de-facto standard for robotics sensing and control.

With the package openzen_sensor now provided as part of the ROS distribution Melodic Morenia it just became a whole lot easier to use our sensors in robotic applications.

Note: This article covers our node for ROS 1. Please see further information regarding our ROS 2 node at the end of this article. This post is a follow-up to our previous ROS driver release.

Published ROS Topics

These are the ROS topics which are published by the OpenZen ROS driver:

Message

Type

Description

/imu/data

Inertial data from the IMU. Includes calibrated acceleration, calibrated angular rates and orientation. The orientation is always unit quaternion.

/imu/nav

Global position from a satellite navigation system. Only available if the IMU includes a GNSS chip.

/imu/is_autocalibration_active

Latched topic indicating if the gyro autocalibration feature is active.

Installation of the LPMS ROS Driver

All that’s needed is to install the package openzen_sensor via your Linux distribution’s package manager. In Ubuntu, with the ROS Melodic Morenia distribution installed, use the following command:

|

1 |

apt install ros-melodic-openzen-sensor |

Once the IMU ROS driver package is installed, we use the following command to start the OpenZen node:

|

1 |

rosrun openzen_sensor openzen_sensor_node |

This will automatically connect to the first available IMU and start streaming its accelerometer, gyroscope and magnetometer data to ROS. If your sensor is equipped with a GPS unit, global positioning information will also be transferred to ROS.

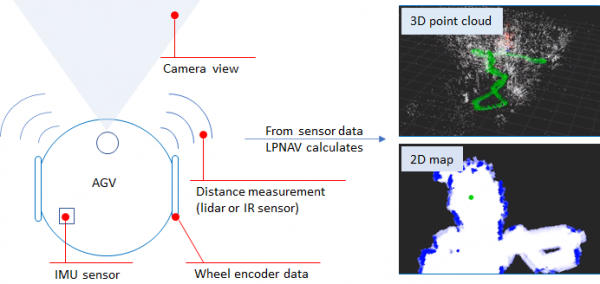

Once a sensor has been connected via the motion sensor driver, the data from the sensor is exported via ROS topics which can be consumed by other ROS components such as a navigation and path planning system.

Outputting IMU sensor values on the command line can now be easily done with:

|

1 |

rostopic <span class="nb">echo</span> /imu/data |

and the data can be plotted with:

|

1 |

rosrun rqt_plot rqt_plot /imu/data/linear_acceleration |

More information on the usage of the OpenZen IMU ROS driver can be found in the repository of the driver.

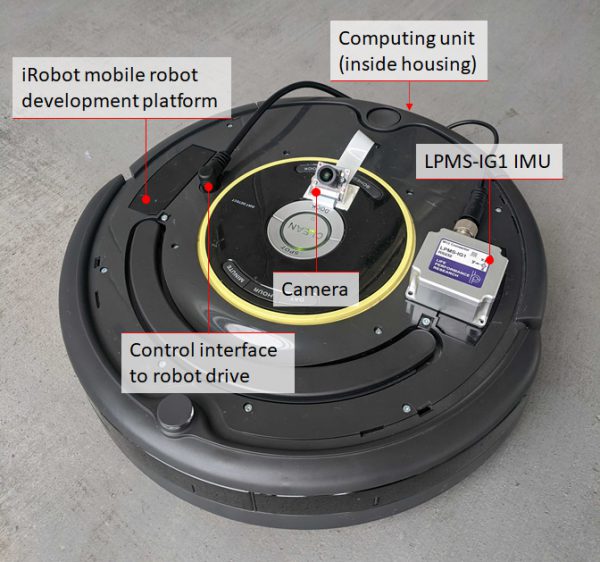

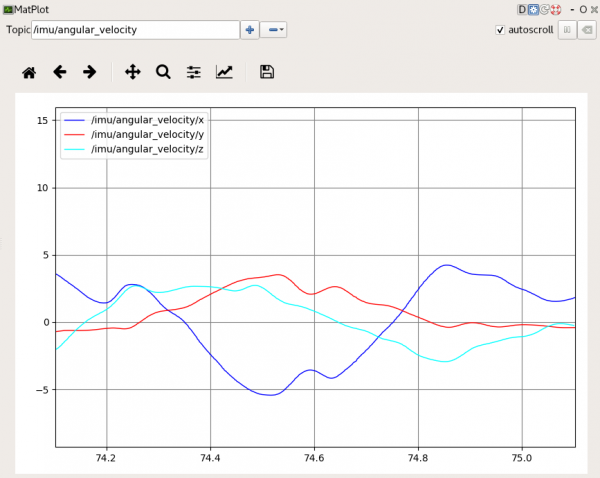

The image above shows an angular velocity output graph in the ROS MatPlot application from an LPMS-IG1 sensor.

ROS 2 Release

We have recently released a ROS 2 version of our OpenZEN ROS node. The node is not part of an official ROS2 release yet, but it works well on the latest release Foxy. For surther information and source code see the OpenZenROS2 repository.